If you work in a regulated contact center, you’re probably living through the tension of being dragged in opposite directions.

On one side, there’s intense pressure to “do more with AI” – faster, cheaper, at greater scale. On the other, your risk, compliance, and clinical or legal teams keep reminding you that one wrong answer is not just embarrassing; it can be unlawful.

That tension is now shaping how we frame our online search for solutions.

Questions like “what is the most secure contact center software?” or “what is the most compliant knowledge management software?” have stopped being abstract queries and started appearing in RFPs, board packs, and conversations with regulators.

Frustratingly, you will not find the answer in a generic features list. In healthcare, financial services, and utilities, “secure” and “compliant” are not marketing adjectives; they are engineering choices that show up in your governance model, audit trails, and AI guardrails. They are evidenced by audit, not just claimed in a slide deck.

The Regulated‑Industry Reality: When A Wrong Answer Is Unlawful, Not Just Inconvenient

Picture the first Monday morning after a major regulatory or policy change goes live.

You’re sitting in the contact center listening to calls. Agents are using new scripts; supervisors are crossing their fingers. Customers are asking detailed questions about coverage, tariffs, or eligibility. Everyone hopes the guidance is correct. Nobody is entirely sure.

That scene plays out far more often than leaders like to admit.

Behind the glass, your “knowledge base” is still an uneasy patchwork of SharePoint folders, intranet pages, legacy PDFs, and personal “cheat sheets” built by agents to survive the day.

Under these conditions, consider questions such as:

- “What is the most secure call center software for healthcare?”

- “What is the most secure contact center software for member services?”

These are not really about telephony, routing, or workforce management. They are questions about whether you can trust the guidance your people and bots are using in high‑stakes conversations, and whether you can prove it later.

Why “AI Plus Any Knowledge Base” Is A Dangerous Fantasy in Healthcare and Financial Services

One of the myths that has grown over the last few years is that you can simply point a large language model (LLM) at your knowledge estate and let it generate perfectly crafted answers.

In a regulated context, this is less a strategy than an experiment you run on your customers and your regulatory license.

LLMs are pattern‑matching machines. They will produce an answer even when the underlying content is outdated, incomplete, or outright contradictory. That’s what hallucination looks like in practice – confident, well‑worded nonsense.

Suppose your knowledge sources are:

- A “PDF graveyard” of conflicting versions.

- A collection of policy documents with no clear owner.

- A mix of formal rules and informal workarounds that are never reconciled.

In that case, “AI‑powered search” simply accelerates the spread of misinformation. It doesn’t matter how secure your infrastructure is if the answers themselves are wrong, untraceable, or impossible to defend in an audit.

So when people type “what is the most secure contact center software?” into a search bar, they are often asking the wrong question.

The better question is: “Which contact center solutions give us governed, expert‑approved answers we can stand behind when the regulator calls?”

Resist the myth. Invest in solutions that match your needs.

Why Your CCaaS Suite’s “Knowledge Module” Is Probably Not Enough

Here’s another myth. On paper, your CRM or CCaaS platform already “does knowledge” via an article library or FAQ engine.

So why add another solution to the tech stack? Here’s the answer:

In practice, these modules are rarely designed as compliance‑grade guidance layers.

They typically lack:

- End‑to‑end content lifecycle governance.

- Deep support for process‑centric, step‑by‑step workflows.

- The ability to act as a headless, single source of truth for every channel and bot.

They are excellent at managing data and basic content; they are less suited to orchestrating complex regulatory scripts, vulnerable‑customer journeys, or detailed member‑services scenarios in real time.

That’s why we now see a distinct category emerging: governed guidance platforms that sit alongside CRM and CCaaS, not underneath them. They provide the compliance‑first knowledge layer that feeds consistent, expert‑approved answers into whatever front‑end your customers and agents already use.

When healthcare and financial‑services leaders ask, “What is a good contact center solution for customer experience?” or “What is a good contact center solution for member services?”, the future‑proof answer is increasingly a combination of your preferred CCaaS plus a governed guidance layer that keeps every channel honest and audit‑ready.

What Security and Compliance Really Mean in Knowledge‑Driven Contact Centers

From a regulated‑industry perspective, “secure” and “compliant” knowledge‑driven contact centers share three critical characteristics.

- Curation and delivery are deliberately separated: In a compliant environment, there is a clear line between how knowledge is authored, reviewed, and approved, and how it is delivered into live workflows. You can see which subject matter expert approved what, when, and how that content moved from draft to live guidance.

- Governance is embedded, not bolted on: Version control, approval workflows, and audit trails are not optional extras. Every change to guidance leaves a footprint: who changed what, when, and why. That’s what makes it possible to answer the regulator’s inevitable question: “How will you prove this guidance was accurate, current, and followed?”

- Compliance scenarios are treated as the norm, not edge cases: Scripts for regulated disclosures, vulnerable‑customer handling, member eligibility, and precise language around a product or benefit are not “nice‑to‑have” templates. They are core artifacts. The architecture is built assuming complexity and regulatory scrutiny are standard operating conditions.
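To make the “footprint” idea concrete, here is a minimal Python sketch of an append‑only change history that can answer “which version was live at that moment, and who approved it?” It is an illustration under stated assumptions, not any vendor’s actual data model: `GuidanceVersion`, `AuditLog`, and every field name and sample value are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class GuidanceVersion:
    """One immutable version of a guidance article (hypothetical model)."""
    article_id: str
    version: int
    body: str
    changed_by: str      # who changed it
    approved_by: str     # which subject matter expert approved it
    reason: str          # why it changed
    live_from: datetime  # when it went live


@dataclass
class AuditLog:
    """Append-only history: versions are added, never edited or deleted."""
    _versions: List[GuidanceVersion] = field(default_factory=list)

    def publish(self, v: GuidanceVersion) -> None:
        self._versions.append(v)

    def live_version_at(self, article_id: str, when: datetime) -> Optional[GuidanceVersion]:
        """Return the version that was live for an article at a given moment."""
        candidates = [
            v for v in self._versions
            if v.article_id == article_id and v.live_from <= when
        ]
        # The most recent version that had already gone live, or None if there was none.
        return max(candidates, key=lambda v: v.live_from, default=None)


# Hypothetical sample data: two versions of one eligibility article.
log = AuditLog()
log.publish(GuidanceVersion("eligibility", 1, "Original rule text", "author_a",
                            "sme_b", "Initial release",
                            datetime(2025, 1, 1, tzinfo=timezone.utc)))
log.publish(GuidanceVersion("eligibility", 2, "Updated rule text", "author_a",
                            "sme_c", "Policy change",
                            datetime(2025, 6, 1, tzinfo=timezone.utc)))

# Reconstruct what guidance was live during a call on 15 March 2025.
on_call = log.live_version_at("eligibility", datetime(2025, 3, 15, tzinfo=timezone.utc))
print(on_call.version, on_call.approved_by)  # prints "1 sme_b"
```

The design choice worth noting is immutability: because versions are only ever appended, the history a regulator sees is the history that actually happened.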

In that world, the most secure contact center software is not defined by how many channels it supports out‑of‑the‑box. It is defined by how tightly it constrains AI to expert‑approved content, how transparently it evidences the guidance given, and how easily you can demonstrate that people and bots stayed inside policy.

Similarly, the most compliant knowledge management software is not the platform with the biggest library. It is the one that treats knowledge as governed infrastructure, with clear ownership, lifecycle control, and a design that assumes audits, investigations, and regulator enquiries are routine events, not rare exceptions.

How Governed Guidance Tames AI Without Losing Speed

The good news is you do not have to choose between “AI‑driven speed” and “governance‑driven control.” You can have both, but only if you are intentional about how AI and humans collaborate around a curated knowledge foundation.

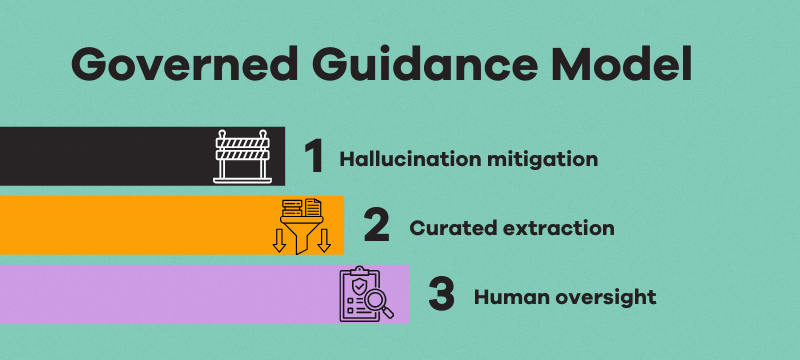

A governed guidance model does three things differently:

- Hallucination mitigation: AI is strictly constrained to a vetted knowledge base. Retrieval‑Augmented Generation and hard guardrails prevent it from answering outside approved content. If the answer isn’t in the corpus, the AI doesn’t improvise; it escalates.

- Curated extraction, not policy invention: Generative AI is used to summarize, extract, and re‑express approved content in context, not to invent new interpretations of policy. The model becomes a fast assistant, not an unsupervised policy designer.

- Human oversight with full auditability: Subject matter experts remain in the loop, piloting, testing, and approving new guidance before it goes live. Every answer delivered, whether to an agent desktop, an IVR, a chatbot, or a self‑service page, can be reconstructed and inspected.
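The “answer from approved content or escalate” rule can be sketched in a few lines of Python. This is a toy illustration, not a production retrieval pipeline: simple word overlap stands in for real retrieval, and `ApprovedArticle`, `Answer`, `answer_from_approved`, and the `min_overlap` threshold are all hypothetical names chosen for the example.

```python
from dataclasses import dataclass
from typing import List, Optional, Set


@dataclass(frozen=True)
class ApprovedArticle:
    article_id: str
    version: int
    text: str


@dataclass(frozen=True)
class Answer:
    escalated: bool
    text: str
    source: Optional[ApprovedArticle]  # kept so the answer can be reconstructed later


def _tokens(s: str) -> Set[str]:
    """Lowercase words with surrounding punctuation stripped."""
    return {w.strip(".,?!").lower() for w in s.split()}


def answer_from_approved(question: str, corpus: List[ApprovedArticle],
                         min_overlap: int = 2) -> Answer:
    """Answer only from approved content; escalate when nothing matches well enough."""
    q = _tokens(question)
    best, best_score = None, 0
    for article in corpus:
        score = len(q & _tokens(article.text))  # crude stand-in for real retrieval
        if score > best_score:
            best, best_score = article, score
    if best is None or best_score < min_overlap:
        # Nothing approved covers this question: do not improvise.
        return Answer(True, "Escalating to a human expert.", None)
    # A real system would pass best.text to a model as its only permitted context.
    return Answer(False, best.text, best)


# Hypothetical approved corpus with a single article.
corpus = [ApprovedArticle("refund-policy", 3,
                          "Refunds are issued within 14 days of cancellation.")]

grounded = answer_from_approved("When are refunds issued after cancellation?", corpus)
unknown = answer_from_approved("Can I change my tariff?", corpus)
print(grounded.escalated, grounded.source.article_id)  # prints "False refund-policy"
print(unknown.escalated)                               # prints "True"
```

Because each `Answer` carries the article and version it came from, the same record supports both the guardrail and the audit trail.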

In other words, AI becomes part of the governance model. It supplies velocity; humans provide judgment and accountability.

That’s the only sustainable way to reconcile board‑level ambitions for AI with regulator‑level expectations for control.

Five Questions Every Board‑Level Buyer Should Ask About “Secure” Contact Center Solutions

If you are evaluating contact center software, knowledge platforms, or AI assistants this year, you can simplify the conversation by asking five direct questions.

- How do you prevent AI from answering outside approved content? Look for explicit guardrails, not vague reassurance. If the vendor cannot describe their hallucination mitigation strategy in plain language, treat that as a warning sign.

- How do you evidence what guidance was given, and by whom, when the regulator asks? Ask to see audit trails in action. You should be able to reconstruct specific interactions, identify the guidance displayed, and show the version history behind it.

- How do you handle major regulatory or policy changes under time pressure? Explore how new or updated guidance flows from SME approval into every channel. The Monday‑morning‑after‑a‑rule‑change scenario is your practical test case.

- Can your governance layer operate headlessly across our existing stack? You should not have to rip and replace contact center infrastructure to get compliance‑grade knowledge. A credible solution will feed consistent guidance into your chosen CCaaS, CRM, chatbots, and portals.

- How does your platform support human‑AI collaboration at the desktop? Ask how the solution helps agents, especially new ones, work with AI safely: surfacing recommended steps, summarizing history, and keeping both humans and machines inside the guardrails.

If a potential partner struggles to answer these questions, it doesn’t matter how impressive their AI demo looks. You may be looking at a tool built for low‑stakes environments, not for the realities of regulated customer contact.

Where Panviva Sits In This Conversation

Within this emerging landscape, Panviva belongs firmly in the governed‑guidance camp. It is not just “another knowledge base,” but a compliance‑grade guidance layer purpose‑built for regulated, AI‑enabled contact centers.

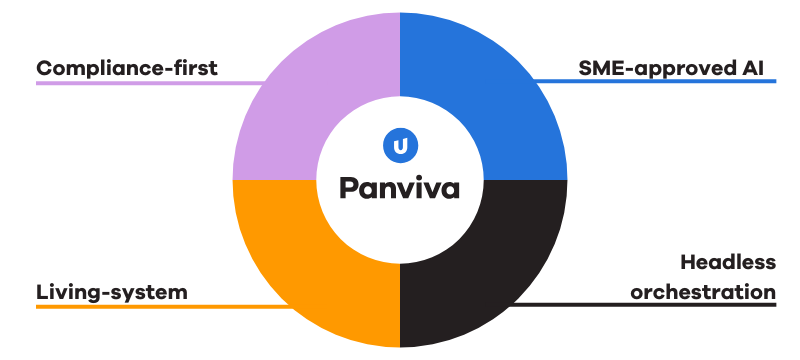

Its architecture reflects that origin story:

- Compliance‑first, process‑centric design for regulated disclosures, vulnerable customers, and complex procedures.

- Expert‑approved, governed AI that works inside formal workflows, with SMEs controlling what becomes live guidance.

- Headless orchestration into the CCaaS, CRM, and AI channels you already own.

- Living‑system capabilities that capture tacit expertise from experienced staff before it leaves the building, shortening time‑to‑competency for new cohorts.

In other words, Panviva gives you a concrete answer when someone asks, “What is the most secure contact center solution for our regulated environment?” It provides the governed knowledge layer your AI, your people, and your regulators can all live with.

Continue The Conversation: Webinar And Whitepaper

If this resonates, the logical next step is to deepen the conversation, not just on technology but on operating models.

In our upcoming webinar, Fiona, Nadine, and I will unpack:

- How healthcare and other regulated organizations are designing AI‑human partnerships around governed knowledge.

- How risk, operations, and technology leaders are working together to make AI “safe, fast, and worth the risk” in real contact centers.

- How to build an internal case for a governed guidance layer alongside your existing CCaaS and CRM investments.

If you want a fuller strategic briefing for your leadership team, you can also download the “Knowledge Under Pressure” whitepaper.

It explores why 2026 has become a tipping point for knowledge governance, AI, and regulated contact centers, and why treating knowledge as governed infrastructure is now a competitive necessity, not a nice‑to‑have.

About the Author

Martin Hill-Wilson is a long-standing member of the CX and Customer Contact community and an experienced business and thought leader in customer strategy, design, and practice.

Over his career, he has held senior roles across consulting, BPO, and systems integration before establishing himself as an independent advisor, consultant, facilitator, and conversation host.